Information Security

Stay ahead of the threat landscape with expert analysis on Information Security and Cyber Security. The Information Security Stage at #RISK Expo Europe covers deep insights into IT security, strategy, data protection, and the digital aspects of protective security to safeguard your critical infrastructure and data assets.

#RISK Intelligence: Information Security Stage at #RISK Expo Europe

- Opinion

The Human Firewall: Why Your People Are Actually Your Best Security Investment

As human-driven cyber risk continues to dominate breach narratives, blame is proving to be a dead end.

- Opinion

Guardrails, Not Gates: Rethinking the Human Role in Cyber Resilience

As security leaders move into 2026, the real challenge is no longer technology but culture.

- Opinion

Is There a “Ghost” in Your Machine?

As AI-enabled identity fraud and remote working converge, trust in who is really behind the keyboard is becoming a critical — and often overlooked — enterprise risk.

- Fact Sheet

#RISK Expo Europe Unites GRC, Cyber, and Regulatory Leaders at Scale

As geopolitical instability and agentic AI redefine the global threat landscape, #RISK Expo Europe returns to ExCeL London on 10–11 November 2026 as the definitive hub for integrated and cross-functional risk.

#RISK Expo Europe 2026 established and still scaling

By year five, repeat attendance, word‑of‑mouth and brand familiarity typically start to play a larger role than launch marketing, but we’re not relaxing.

- Previous

- Next

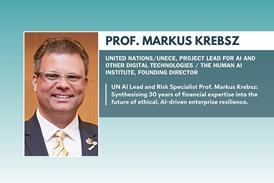

Accountability as a competitive AI differentiator

As the global AI race accelerates, trust — not compute — is emerging as the true source of competitive advantage.

#RISK Expo Europe 2026 is an open, inclusive, community-first expo

Breaking down barriers to entry for the global GRC community, #RISK Expo Europe 2026 returns to ExCeL London on 10 & 11 November. This open, free-to-attend event provides a unique space where practitioners, regulators, and innovators engage on equal terms. Featuring 300+ expert speakers across multiple themed stages and AI-focused workshops, #RISK Expo Europe is the essential gathering point for CEOs, CROs, and CISOs seeking a collaborative, inclusive approach to organisational resilience and integrated risk strategy.

Beyond GRC: #RISK Expo Europe 2026 Adds Five Critical New Categories to Meet Evolving Risk Demands

In direct response to feedback from senior decision-makers, the 2026 programme will now feature dedicated streams for Identity & Access Management, Operational Resilience & Business Continuity, Procurement Risk & Supplier Governance, Physical Security & Asset Protection, and Crisis Management & Incident Response, expanding coverage beyond traditional GRC.

Why #RISK Expo Europe 2026 is the Ultimate High-ROI Hub for GRC & Security Vendors

Positioned as a high-return environment for lead generation and brand positioning, #RISK Expo Europe 2026 (10–11 November, ExCeL London) unites a cohort of senior decision-makers with direct purchasing authority. The event offers dedicated stages spanning the entire ecosystem, from Information Security and BFSI to GRC, Protective Security, and Supply Chain resilience.

From Speed Bump to Guardrail: Mitratech’s Henry Umney on the Strategic Evolution of GRC

Mitratech’s Henry Umney sits down with Nick James to trace the shift in GRC from compliance checklists to a unified, tech-enabled function built for enterprise resilience.

The Countdown is On: Why #RISK, Now in its 4th Year, is Your Essential Stop for 2026 Resilience

In a world defined by volatility, complexity, and convergence, the conversation around risk has never been more urgent. Next week, that conversation moves to ExCeL London as #RISK returns on November 12th and 13th for its largest and most critical event yet.

Why Integrated Risk Management is the Only Path to Resilience

The era of siloed risk is over. In the dynamic and often chaotic world of global commerce, risk is no longer a number relegated to a technical dashboard; it is the definitive metric that separates market leaders from those who are blindsided. As one 2024 attendee put it, “RISK is like having your very own experts on speed dial.”

How AI and Root Cause Analysis are Revolutionising Risk Management

In a recent interview, Nick James, CEO of GRC World Forums, spoke with Mark Rushton, CEO of COMET, a company dedicated to eliminating repeat failure.

The AI Revolution: Navigating a New Frontier of Risk and Responsibility

In 2025, the conversation around Artificial Intelligence has moved decisively beyond the hype cycle. AI is no longer a futuristic concept; it is a present reality, reshaping everything from our boardrooms to our battlefields.

AI Investments and Political Uncertainty with Chris Mason

In this episode, Tom Fox speaks with Christopher Mason, who recently launched his risk advisory practice, Woodhorn Global, which focuses on due diligence investigations for investment situations, particularly in emerging markets and other complex, high-risk scenarios. Chris will be a speaker at the upcoming #RISK New York Conference, July 9–10, NYC.

Beyond the Breach: Brand Reputation and Resilience in the Face of Escalating Cyber Threats

The recent headlines surrounding cyberattacks targeting major global brands like Marks & Spencer, Harrods, and other prominent e-commerce platforms serve as a stark reminder: no organization is immune.

Nominations Open! PICCASO Awards Europe 2025 Launches to Celebrate Excellence in Privacy & Data Protection

In an era defined by rapid technological advancement, ever-evolving regulatory landscapes, and a heightened global focus on individual rights, the field of data privacy and protection has never been more critical.

Navigating the Nexus: AI, Regulation, and Resilience – Join #RISK Digital EU/UK on June 3rd

The risk landscape across the United Kingdom and Europe is undergoing a period of intense transformation.

IT Risk Reimagined: Securing the Digital Future in the Age of AI

The digital landscape is undergoing a transformation of unprecedented scale and speed, largely driven by the exponential growth of Artificial Intelligence (AI).

Beyond Protection: The Rise of Data Enablement and the Future of Data Governance

For years, the conversation around data has been dominated by the concept of “data protection.” Regulations like GDPR and CCPA, while crucial for safeguarding individual privacy, have often positioned data as a liability – something to be locked down, controlled, and minimised.

Data Privacy in the Crosshairs: Navigating the Shifting Sands of US Regulation

For US businesses, data privacy is no longer a niche concern; it’s a central strategic imperative. While a comprehensive federal privacy law remains elusive, a complex and rapidly evolving patchwork of state-level regulations, coupled with increasing enforcement actions, is placing data privacy squarely in the crosshairs of legal and compliance teams across the country.

#RISK Digital Global: Your Gateway to Navigating the Interconnected World of Risk

In today’s increasingly complex and interconnected world, risk management has transcended departmental silos to become a critical enterprise-wide imperative.

Cybersecurity in 2025: A Glimpse into the Future with Google and Leading Experts

The digital landscape is in a state of constant evolution, and the cybersecurity threats we face are becoming increasingly sophisticated and unpredictable.

Business as Unusual? – GRC has never been more important

Trump 2.0, Artificial Intelligence, geopolitical conflicts, disruption to business models, regulatory shifts and financial instability.

Nick James, founder and event director of #RISK, in conversation with Chris Mason

Christopher is VP of Global Compliance and Investigations at Infortal Worldwide and works with clients entering new markets overseas, taking on new business partners, or making significant investment decisions helping them understand the complete risk profile of the opportunity.

Transforming your code of conduct: Tackling new risks to enhance workplace ethics [Sponsored by LRN]

This webinar is designed for senior compliance and ethics professionals to learn best practices and strategies for elevating ethical corporate cultures that align with stakeholders’ expectations.

Riding the Waves of Change: #RISK London – New for 2024

Riding the waves of change is not merely about weathering the storm; it is about harnessing the energy and momentum of transformation to propel oneself forward. It involves cultivating a mindset that embraces learning, adapts to new paradigms, and sees challenges as opportunities for growth and innovation.

Risk is not a four-letter word

Successful business leaders understand that taking calculated risks is a necessary component of growth and innovation. By identifying and mitigating potential risks, businesses can position themselves to capitalise on strategic opportunities and maintain a competitive edge.

Cyber Griffin and #RISK London: A Powerful Partnership for Cyber Resilience

We are delighted to welcome Cyber Griffin on board as partners at #RISK London, taking place October 9 and 10 at ExCeL London.

Privacy Powerhouse Duo Headline #RISK London as Privacy Theatre Heats Up

#RISK London, the UK’s leading risk-focused expo, is thrilled to announce the participation of two leading privacy experts: Sarah Pearce, Partner at Hunton Andrews Kurth LLP, and Bojana Bellamy, President of Hunton Andrews Kurth LLP’s Centre for Information Policy Leadership (CIPL).

Introducing #RISK London Partners, The Association of Governance, Risk & Compliance

We are delighted to welcome The Association of Governance, Risk & Compliance (AGRC) on board as partners at #RISK London.

Introducing #RISK London Partners, GRC Report

We are delighted to welcome GRC Report on board as partners at #RISK London.

Introducing #RISK London Partners, Cyber Security Unity

We are delighted to welcome Cyber Security Unity on board as partners at #RISK London.

The Association of Governance, Risk and Compliance (AGRC) are partnering with #RISK London

The Association of Governance, Risk and Compliance (AGRC), a global, non-profit organisation dedicated to empowering GRC professionals, is partnering with #RISK London, 9–10 October, ExCeL London.